- SAFe Training

- Choose a Course

- Public Training Schedule

- SAFe Certifications

- Implementing SAFe 6.0

- Leading SAFe 6.0

- SAFe 6.0 Lean Portfolio Management

- SAFe 6.0 Release Train Engineer

- SAFe 6.0 Agile Product Management

- SAFe 6.0 for Architects

- SAFe 6.0 Scrum Master

- SAFe 6.0 DevOps

- SAFe 6.0 Product Owner/Product Manager

- SAFe 6.0 for Teams

- SAFe 5.1 Advanced Scrum Master

- Agile Marketing with SAFe

- SAFe 5 for Government

- SAFe 5 Agile Software Engineering

- SAFe Micro-credentials

- Leading in the Digital Age

- Agile HR Training

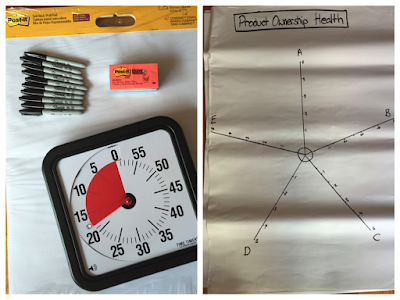

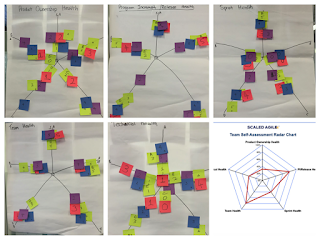

- SAFe Implementation Services

- Our Books

- Blog

- Customer Stories

- About Pretty Agile

- Resources